Blog Post About the Energy Use of GenAI

Summary

Environmental sustainability is an important issue to me and I’ve tried to incorporate it into my work as a UX designer. One way of supporting sustainability in my role is through raising awareness of Avanade’s work as one of the steering members of the Green Software Foundation, which is an organization that sets standards and shares best practices for building software that is more energy efficient.

When a hybrid expert panel was held at Accenture’s offices, where Avanade is co-located, I took the opportunity to draft a blog post to share key points from the discussion with a wider audience. The blog was published on Avanade’s external- and internal-facing platform, Avanade Insights, which is used to support Avanade’s sales efforts and position the company as a thought leader within the tech industry. The following blog post focuses on carbon reduction strategies for generative AI.

Two Strategies to Reduce the Carbon Footprint of GenAI

Introduction

Typing a prompt into a generative AI tool like ChatGPT and receiving a fully formed answer can feel like magic. But behind the scenes, a tremendous amount of energy and physical resources, consumed at a data center somewhere in the world, makes this experience possible.

The carbon footprint of software is invisible to the people using it, but managing it is becoming particularly important as GenAI grows in popularity. Increasingly, Avanade is offering co-pilots and other services that rely on large language models to clients. These offer great benefits to end users, but they are also energy-intensive. Going forward, the computing power needed to run GenAI is only expected to increase: Gartner predicts that by 2025, AI will consume more energy than the human workforce.

The steps that we can take to mitigate the energy-intensiveness of software, including GenAI, were the topic of a recent event at Accenture’s New York offices, where Avanade is co-located.

The panel, “Building, Deploying, and Measuring Energy-Efficient Digital Products,” featured experts from Accenture, Microsoft, Google, and Electricity Maps. All these companies, along with Avanade, are members of the Green Software Foundation, which has created standards and best practices for developing energy-efficient software.

While the panel covered many topics related to reducing the amount of power and hardware needed to run digital applications, the conversation inevitably turned to GenAI, in part because large language models (LLMs) are particularly energy-intensive. In the conversation, the panelists highlighted two strategies, spatial shifting and temporal shifting, that are particularly effective in reducing the carbon emissions associated with running these models. Here’s a brief primer on what these terms mean in practice.

Spatial Shifting

When you plug your laptop or any other device into a wall outlet, the energy you’re using can come either from renewable sources (such as solar, wind, and hydro) or from polluting ones (like coal or gas), depending on where you are in the world. Some places are much further along in their transition to clean power than others, so it makes sense to run energy-intensive workloads like LLMs in data centers in states or countries that have a greater proportion of renewable energy powering their grid.

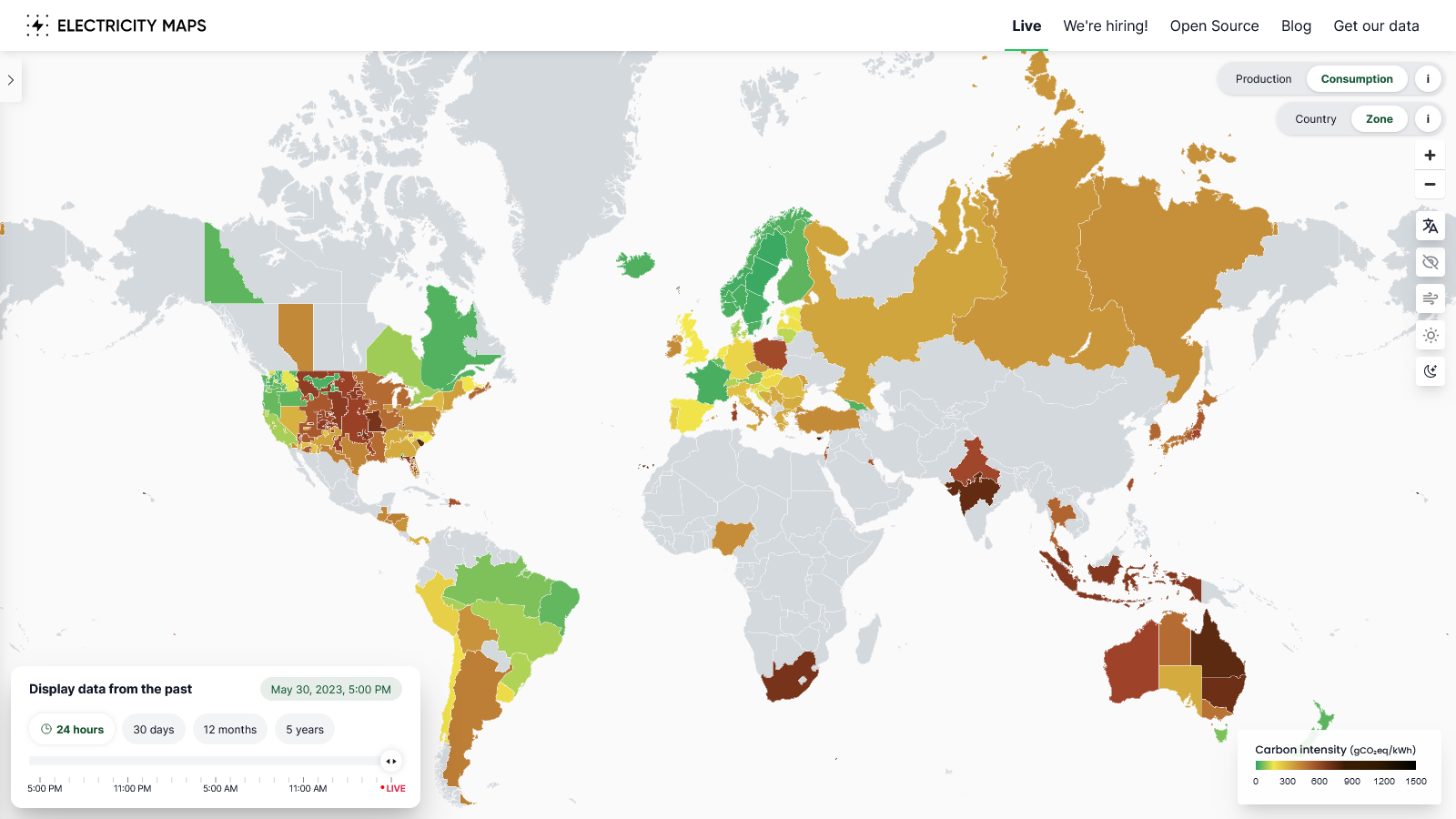

A tool like Electricity Maps, which shows the amount of renewable energy available in any given country, is a valuable resource for making these types of decisions. For example, if you’re a technologist based in a region whose grid still relies heavily on coal or gas, sending your workload to a data center in a region running on cleaner energy will reduce the software’s carbon footprint. Using a cloud platform from one of the global, hyperscale data companies, like Microsoft, makes this type of coordination possible.

Electricity Maps Dashboard
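
To make spatial shifting a little more concrete, here is a minimal Python sketch of the decision involved. It is an illustration only: the region names and the carbon intensity figures (in grams of CO2-equivalent per kilowatt-hour) are invented placeholders, and the function names are hypothetical. In practice the numbers would come from a grid data provider rather than a hard-coded table.

```python
# Illustrative only: these gCO2eq/kWh figures are made-up placeholders,
# not real readings from Electricity Maps or any other provider.
CARBON_INTENSITY = {
    "region-a": 420.0,
    "region-b": 150.0,
    "region-c": 65.0,
}


def pick_cleanest_region(intensity_by_region, allowed_regions=None):
    """Return the candidate region with the lowest carbon intensity.

    allowed_regions lets the caller rule out regions for reasons such as
    latency or data residency; None means every region is a candidate.
    """
    candidates = {
        region: value
        for region, value in intensity_by_region.items()
        if allowed_regions is None or region in allowed_regions
    }
    if not candidates:
        raise ValueError("no candidate regions to choose from")
    return min(candidates, key=candidates.get)


def estimated_emissions_g(energy_kwh, intensity_g_per_kwh):
    """Emissions in grams CO2eq = energy used (kWh) x grid intensity (g/kWh)."""
    return energy_kwh * intensity_g_per_kwh


if __name__ == "__main__":
    region = pick_cleanest_region(CARBON_INTENSITY)
    print(region, estimated_emissions_g(10, CARBON_INTENSITY[region]))
```

The same workload uses roughly the same amount of energy wherever it runs. What changes is the second factor in the multiplication, which is why moving the job can lower its footprint without changing the software itself.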

Temporal Shifting

A quirk of renewable energy is that it fluctuates over the course of the day, depending on whether the sun is shining or the wind is blowing. Industrial-scale batteries can solve this issue, but in most countries, today’s reality is that renewable energy is highly variable. That’s where temporal shifting comes in: by configuring workloads to run during the times when the grid is being powered by renewables (e.g., in the early afternoon when the sun is strongest), you can reduce the carbon intensity of training a model. Because Electricity Maps uses machine learning to keep its data updated in real time, 24/7, it is also a great tool for scheduling workloads.

This strategy is already being used for charging electric vehicles. Drivers don’t care exactly when their car is charged, as long as it’s topped up in the morning when they need to get to work. So programming the charge to run in the middle of the night, when wind power might be stronger and there’s less overall demand on the grid, can significantly reduce the emissions associated with charging.
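
The scheduling logic behind temporal shifting, for an EV charge or a model training run alike, can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: the hourly forecast values are invented, the function name is hypothetical, and the job is assumed to run for a whole number of hours without interruption.

```python
def best_start_hour(forecast, duration_hours, deadline_hour):
    """Return (start_hour, average_intensity) of the cleanest window.

    forecast: hourly carbon intensity values, index 0 = the next hour.
    duration_hours: how long the job runs, in whole hours.
    deadline_hour: the job must finish by this index (exclusive).
    """
    last_start = min(deadline_hour, len(forecast)) - duration_hours
    if duration_hours <= 0 or last_start < 0:
        raise ValueError("job does not fit before the deadline")

    window_sum = sum(forecast[:duration_hours])
    best_start, best_sum = 0, window_sum
    for start in range(1, last_start + 1):
        # Slide the window one hour: drop the oldest hour, add the newest.
        window_sum += forecast[start + duration_hours - 1] - forecast[start - 1]
        if window_sum < best_sum:
            best_start, best_sum = start, window_sum
    return best_start, best_sum / duration_hours


# Hypothetical forecast in gCO2eq/kWh for the next eight hours.
FORECAST = [400, 380, 300, 200, 150, 160, 250, 390]

if __name__ == "__main__":
    print(best_start_hour(FORECAST, duration_hours=2, deadline_hour=8))
```

With these placeholder numbers, a two-hour job that must finish within eight hours would start at hour 4, when the average intensity over the window is lowest. The deadline plays the same role as the driver’s morning commute: the job can move around freely as long as it is done in time.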

Conclusion & Next Steps

Together, these two strategies are known as “demand shifting,” which falls under the larger umbrella of “carbon awareness.” Stated simply, this means programming software to “do more when the electricity is cleaner and do less when the electricity is dirtier.”
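
One possible reading of “do more when the electricity is cleaner and do less when the electricity is dirtier” is a workload that adjusts how much it processes based on the current state of the grid. The Python sketch below is an assumption-laden illustration, not a recommended configuration: the function name, the thresholds, and the batch sizes are all hypothetical values chosen to keep the arithmetic easy to follow.

```python
def batch_size_for(intensity, clean_threshold=100.0, dirty_threshold=400.0,
                   max_batch=100, min_batch=10):
    """Scale work linearly between max_batch (clean grid) and min_batch (dirty grid).

    intensity and both thresholds are in gCO2eq/kWh; the defaults are
    placeholders, not values taken from any standard.
    """
    if intensity <= clean_threshold:
        return max_batch
    if intensity >= dirty_threshold:
        return min_batch
    # How far the grid sits between "clean" (0.0) and "dirty" (1.0).
    fraction_dirty = (intensity - clean_threshold) / (dirty_threshold - clean_threshold)
    return round(max_batch - fraction_dirty * (max_batch - min_batch))


if __name__ == "__main__":
    for reading in (50, 250, 500):
        print(reading, batch_size_for(reading))
```

A queue of non-urgent jobs could call a function like this before each batch, working through more items when the grid is clean and slowing to a trickle when it is not.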

This principle can be applied to more workloads than just GenAI, but LLMs are particularly suited to this use case because they need a large amount of computing power but don’t necessarily need to be run at a specific time and place.

A lack of awareness about these ideas can be a barrier to putting these strategies into practice. But having conversations with your project team about them can help to spread the word. In addition, the Green Software Foundation’s Green Software Practitioner Certification is a great way to learn more. This course is a first step toward understanding how greater efficiency can reduce costs, improve performance, and help companies reach their sustainability commitments.